Hi,

I was wondering if someone knows of any masked AES implementations that are ready-to-use on a CW target?

Thanks!

Yes, several implementations have been contributed by @jmichel:

You’ll find them here: chipwhisperer/hardware/victims/firmware/crypto at develop · newaetech/chipwhisperer · GitHub

Thanks! But I’m having trouble cloning the repository, I get an error that it’s not found:

Does it mean I don’t have permission to clone it?

That’s not how git works: you can’t clone an arbitrary subdirectory of a repo.

First, clone the entire ChipWhisperer repo, if you haven’t already:

git clone https://github.com/newaetech/chipwhisperer.git

Then, cd to hardware/victims/firmware/crypto and grab the submodules you want, e.g.:

git submodule update --init Higher-Order-Masked-AES-128/

Thank you for the prompt reply, I’m quite a newbie with these things. The same goes for how I should adjust the lines for compiling and programming. I therefore need a little bit of help with how I should change these lines in order to use the masked implementation:

I’m sorry for very elementary questions, I’m just not so versed in these things yet. I appreciate your help.

As an alternative, for our research, I’m trying to keep pre-compiled firmware for all targets and all AES implementations on GitHub. That’s the only way I can ensure reproducibility of our results.

You can find/download all ELF + HEX files there: https://github.com/jmichelp/chipwhisperer-firmware

This way you only need to care about flashing the correct file.

Thank you! This was invaluable, I really appreciate it. I tried the “MASKEDAES_ANSSI” for the STM32F3 and the number of leakage points doing TVLA dropped from 16350/20000 points in the unmasked TinyAES128 implementation down to 708/20000. I was wondering if you could either explain or direct me to documents where I can read about the different implementations. In particular I am curious about the various implementations for the STM32F3, the ones named

“MASKEDAES_ANSSI”

“MASKEDAES_ANSSI+KEYSCHEDULE”

“MASKEDAES_ANSSI+UNROLLED”

“MASKEDAES_ANSSI+UNROLLED+KEYSCHEDULE”

“MBEDTLS”

I assume all are the 128-bit version?

And I assume that the “TINYAES128C” is just the unmasked version?

Thanks!

Yes they are all AES128 implementations.

MBEDTLS is basically what it says: the unprotected AES implementation from the MbedTLS library. It’s faster than TinyAES but shouldn’t be much harder to attack.

MaskedAES ANSSI is the implementation from ANSSI coming from their Github account: https://github.com/ANSSI-FR/SecAESSTM32. The documentation is available there.

The keywords after the implementation are the options the firmware was compiled with:

KEYSCHEDULE will include the key scheduling as part of the trace (typically on ChipWhisperer, the trigger happens right after key scheduling). This is useful here because this implementation has the capability of also masking the key schedule. Capturing it therefore allows you to assess the efficiency of the protection.

UNROLLED will unroll most of the loops and is therefore faster, yielding shorter traces at the expense of requiring more flash.

UNROLLED+KEYSCHEDULE means that both of the above options were set when compiling the firmware.

Hi,

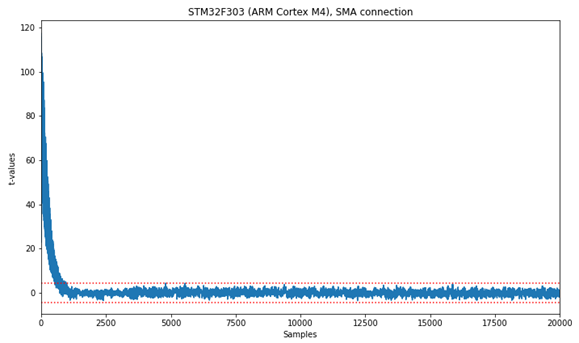

Thank you! I will look into the theory of the masked implementations a bit more. But I’m wondering, how many traces should I expect to collect for extracting the key using CPA? First I did a TVLA and there is not much leakage:

(there are 708/20000 leakage points)
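For reference, a count such as 708/20000 is usually obtained by running Welch's t-test at every sample index between two groups of traces (for example fixed versus random plaintext) and counting the indices where |t| exceeds a threshold. The sketch below is a minimal pure-Python illustration on synthetic data. The 4.5 threshold is the conventional TVLA choice and is an assumption here, since the thread does not say which threshold or grouping was used; the function names are hypothetical.

```python
import math
import random


def welch_t(group_a, group_b):
    """Welch's t-statistic for two lists of samples taken at one time index."""
    na, nb = len(group_a), len(group_b)
    mean_a = sum(group_a) / na
    mean_b = sum(group_b) / nb
    var_a = sum((x - mean_a) ** 2 for x in group_a) / (na - 1)
    var_b = sum((x - mean_b) ** 2 for x in group_b) / (nb - 1)
    return (mean_a - mean_b) / math.sqrt(var_a / na + var_b / nb)


def count_leakage_points(traces_a, traces_b, threshold=4.5):
    """Count sample indices where |t| exceeds the threshold (assumed 4.5)."""
    n_points = len(traces_a[0])
    leaky = 0
    for i in range(n_points):
        col_a = [trace[i] for trace in traces_a]
        col_b = [trace[i] for trace in traces_b]
        if abs(welch_t(col_a, col_b)) > threshold:
            leaky += 1
    return leaky


# Synthetic demo: 10 sample points, only index 3 depends on the group.
rng = random.Random(0)
fixed = [[rng.gauss(0, 1) + (2.0 if i == 3 else 0.0) for i in range(10)]
         for _ in range(500)]
rand = [[rng.gauss(0, 1) for _ in range(10)] for _ in range(500)]
leaky_points = count_leakage_points(fixed, rand)
print(f"{leaky_points}/10 leakage points")
```

On real captures, traces_a and traces_b would be the two groups of recorded traces, each a list of equal-length sample lists.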

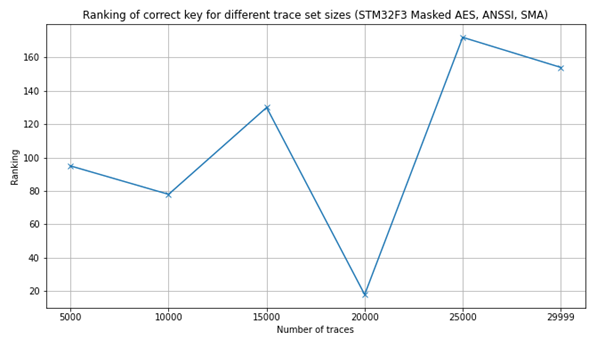

Then I did a CPA with my own code, attacking only the first byte, and ended up with this, using 29999 traces (I got a time-out for the first trace):

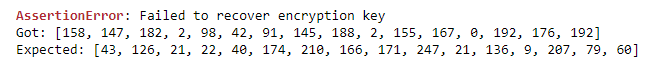

To make sure it wasn’t my own code that caused issues, I ran the data set through CW CPA tutorial and ended up with this guessed key compared to the actual key:

I’m just wondering what I should expect, and what experience you have with this. I’ve used the regular SMA connection for collecting the traces (I’m planning on running the dataset through some machine learning code, but haven’t had time yet).

Thanks!

Here you can find the published paper by ANSSI team about how they attacked their own implementation: https://eprint.iacr.org/2021/592.pdf

Basically this is the kind of implementation that you attack with template attacks. CPA shouldn’t be able to recover the key.

We’re using this implementation in our own research to study how much more efficient deep learning is against protected implementations (see: https://elie.net/blog/security/hacker-guide-to-deep-learning-side-channel-attacks-the-theory/ and the links to our 2 presentations which are contained in this blog post).
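To illustrate why a first-order CPA is not expected to work against a masked implementation, the following minimal simulation compares an unmasked and a masked leakage model. It is a sketch under simplifying assumptions: a noisy Hamming-weight leakage, a plain Boolean mask drawn fresh for every trace, and made-up noise levels. It is not ANSSI's actual scheme (their documentation describes the real countermeasures), and all names are hypothetical.

```python
import math
import random


def hamming_weight(byte):
    """Number of set bits in a byte."""
    return bin(byte).count("1")


def pearson(xs, ys):
    """Plain Pearson correlation coefficient of two equal-length lists."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


rng = random.Random(1)
n_traces = 20000
key_byte = 0x2B  # arbitrary example key byte

model, leak_plain, leak_masked = [], [], []
for _ in range(n_traces):
    pt = rng.randrange(256)
    value = pt ^ key_byte       # sensitive intermediate (S-box omitted for brevity)
    mask = rng.randrange(256)   # fresh random mask for every trace
    model.append(hamming_weight(value))  # attacker's CPA model, correct key guess
    leak_plain.append(hamming_weight(value) + rng.gauss(0, 1))
    leak_masked.append(hamming_weight(value ^ mask) + rng.gauss(0, 1))

corr_plain = pearson(model, leak_plain)
corr_masked = pearson(model, leak_masked)
print(f"unmasked: {corr_plain:.3f}, masked: {corr_masked:.3f}")
```

In this toy model the device only ever handles value ^ mask, which is statistically independent of value, so the correlation with the attacker's model collapses even for the correct key guess. Attacks that combine several leakage points, or profiled attacks such as templates and neural networks, can in principle still exploit the joint leakage of the mask and the masked value.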

Hi!

Thank you. That’s sort of relieving that I wasn’t supposed to be able to extract the key with CPA. I thought I had blundered in some way. I actually read the blog post and watched your presentation earlier this week. Very interesting stuff. I’m very new to machine learning and deep learning (just started looking into it a few weeks ago), and at the moment I find it quite overwhelming, but on the other hand I also find it quite intriguing how it can be used for SCA.

I’ve been trying to play around with Gaussian Naive Bayes, SVM, MLP, and Random Forest, but I find the results to be fluctuating and diverging very easily depending on the parameters I choose. Would you say that those techniques are insufficient to break the ANSSI implementation, and that stronger deep learning methods are the way to go, or should I be able to extract the key with simpler machine learning algorithms?

It’s rather hard to give a clear and definitive answer here as this is an ongoing research field.

In our research we decided to have everything automated and therefore to use complex models which are able to process the traces of the full AES encryption (all 10 rounds). Other researchers use much smaller machine learning models after pre-processing the traces.

Hi,

Thank you. I just have two follow-up questions:

In the TVLA plot I provided above, why is there such a big “tail” at the beginning, about the first 1000 samples?

What is the reason why CPA does not work on that masked implementation, but that deep learning methods work?

Thanks!

@jmichel I am trying to use the XMEGA microcontroller to run the masked implementation. After visiting your GitHub repository of pre-built firmware, I am not sure which file to select for the XMEGA, since I don’t see any masked AES implementation for the CW303. I’ve also tried git submodule update --init Higher-Order-Masked-AES-128, but it doesn’t work: it cannot find the git submodule. On the other hand, the technique proposed by @Alex_Dewar:

[quote=“Alex_Dewar, post:2, topic:2166”]

chipwhisperer/hardware/victims/firmware/crypto/ via bash/git bash

git submodule update --init secAES-ATmega8515 (at least this submodule update worked)

CRYPTO_TARGET=MASKEDAES

Alex

[/quote]

doesn’t seem to work for me, even when I add CRYPTO_OPTIONS.

Any help would be a real lifesaver (lol).

Weird, it seems like some of the masked AES submodules got removed at some point. I’ll get those added again. Regarding the unsupported platform/hal, it looks like the makefile only checks for Arm devices and the AVR, not the XMEGA. I think this is just an oversight from when Arm support was added, but I’m not 100% sure. Try going to hardware/victims/firmware/crypto/Makefile.maskedaes and changing else ifeq ($(HAL),avr) to else ifeq ($(HAL),$(filter $(HAL), avr xmega)) on line 42.

Alex

Hi,

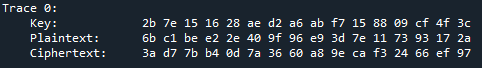

I got the ANSSI masked AES to work on my CW308 STM32F303 board by using

PLATFORM = 'CW308_STM32F3'

CRYPTO_TARGET = 'MASKEDAES'

CRYPTO_OPTIONS = 'ANSSI'
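As a rough sketch of how these three settings end up on the build command line, the snippet below assembles a make invocation from them. It assumes the make variables are named PLATFORM, CRYPTO_TARGET and CRYPTO_OPTIONS, and that several options are joined with '+' (as in CRYPTO_OPTIONS=ANSSI+UNROLLED+KEYSCHEDULE). The helper name is hypothetical, and the firmware directory in which to run make is deliberately left out.

```python
def make_args(platform, crypto_target, crypto_options=()):
    """Build the argument list for a firmware build (hypothetical helper)."""
    args = ["make", f"PLATFORM={platform}", f"CRYPTO_TARGET={crypto_target}"]
    if crypto_options:
        # Assumption: multiple options are joined with '+'.
        args.append("CRYPTO_OPTIONS=" + "+".join(crypto_options))
    return args


cmd = make_args("CW308_STM32F3", "MASKEDAES", ["ANSSI"])
print(" ".join(cmd))
# To actually build, pass cmd to subprocess.run() with cwd set to the
# firmware directory you are compiling (not shown here).
```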

I did a simple encryption test, and it works:

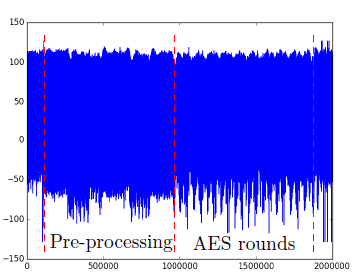

From ANSSI-FR’s GitHub repository, they present this plot at the very end:

To me this becomes an issue when using the CW Lite for data collection, as it can only buffer 24400 samples (?). Also, in which source file would I have to make changes if I wanted the CW trigger to start when the “AES rounds” in the above figure start? After all, I just need the trace from the beginning of the first round to try an S-box output HW attack with CPA, and possibly a template attack.

Are there any other CW masking options that would perhaps better fit the sample limitations of the CW Lite? (Just for learning, not necessarily the absolute best masking scheme.) I tried using CRYPTO_OPTIONS = 'KNARFRANK' and CRYPTO_OPTIONS = 'RIOUBSAES', but then I get compilation errors. For example, for the latter, this is an excerpt:

Thanks!

Yeah with the CW-lite you have a maximum of 24400 samples.

If you move up to CW-pro you’ll get up to 98K samples, or unlimited samples with streaming if your sampling clock isn’t too high (max 10 MS/s). CW-Husky, which will be launched soon, will have sampling specs similar to the pro’s.

I wouldn’t necessarily rule out getting some results from that target with CW-lite.

I haven’t read their full paper but that plot actually comes from clocking the target at 4 MHz and getting traces from an external oscilloscope at 100 MHz. That plot is about 2M samples, so it’s actually 2M/25 = 80000 clock cycles, so not too far from CW’s capacity if you sample at x1.
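The arithmetic above can be checked with a few lines of Python. The 24400-sample buffer is the CW-Lite figure quoted earlier in the thread, the plot length is the approximate 2M samples mentioned above, and the variable names are illustrative only.

```python
import math

scope_rate_hz = 100_000_000   # external oscilloscope sampling rate
target_clock_hz = 4_000_000   # target clock used for the ANSSI plot
plot_samples = 2_000_000      # approximate length of the plot, in samples
cwlite_buffer = 24_400        # CW-Lite sample buffer

# Samples the external scope records per target clock cycle.
samples_per_cycle = scope_rate_hz // target_clock_hz

# Length of the operation in target clock cycles.
clock_cycles = plot_samples // samples_per_cycle

# Number of full-buffer captures needed at one sample per clock cycle (x1).
segments_at_x1 = math.ceil(clock_cycles / cwlite_buffer)

print(samples_per_cycle, clock_cycles, segments_at_x1)
```

So at x1 sampling the whole operation is a handful of CW-Lite buffers long, which is why decimation or offset captures are enough to cover it.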

There are a few things you can do:

scope.adc.decimate

scope.adc.offset (capture the first 24.4K samples with scope.adc.offset=0, the next 24.4K samples with scope.adc.offset=24.4K, etc…)

scope.adc.offset to capture only what you need.

Jean-Pierre
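The segmented-capture idea with scope.adc.offset could be sketched as follows. This is only an outline under assumptions: capture_once is a hypothetical caller-supplied function that arms the scope, runs the target once and returns the samples, and the scope is replaced by a stand-in object so the example runs without hardware. Only the scope.adc.offset and scope.adc.samples attributes are taken from the thread.

```python
from types import SimpleNamespace


def segment_offsets(total_samples, buffer_size):
    """Start offsets needed to cover total_samples with a fixed-size buffer."""
    return list(range(0, total_samples, buffer_size))


def capture_in_segments(scope, capture_once, total_samples, buffer_size):
    """Capture one long operation piece by piece and stitch the pieces.

    capture_once is a hypothetical function that arms the scope, runs the
    target once and returns the captured samples as a list.
    """
    stitched = []
    for offset in segment_offsets(total_samples, buffer_size):
        scope.adc.offset = offset
        scope.adc.samples = min(buffer_size, total_samples - offset)
        stitched.extend(capture_once())
    return stitched


# Stand-in objects so the sketch runs without hardware.
fake_scope = SimpleNamespace(adc=SimpleNamespace(offset=0, samples=0))
fake_signal = list(range(80000))


def fake_capture():
    """Return the slice of the fake signal selected by offset and samples."""
    start = fake_scope.adc.offset
    return fake_signal[start:start + fake_scope.adc.samples]


trace = capture_in_segments(fake_scope, fake_capture, 80000, 24400)
print(len(trace), segment_offsets(80000, 24400))
```

Note that each segment comes from a separate run of the target. With a masked implementation the random masks most likely differ between runs, so a stitched trace mixes several executions; it is useful for locating operations, less so as a single attack trace.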

Hi Jean-Pierre,

Thank you for the tips. Just to see if I could locate the AES rounds, I set the ADC settings to the following:

scope.adc.decimate = 3

scope.adc.samples = 24000

scope.clock.adc_src = 'clkgen_x1'

scope.adc.offset = 0

and got this plot:

Is it safe to assume that what I’ve marked in red are the 10 AES rounds? It leads me to another question: how much performance gain would one expect when trying to do an attack if you have 1 sample per clock cycle (clkgen_x1) versus 4 samples per clock cycle (clkgen_x4)?

1- Based on the authors’ own power trace, I would expect that to be the pre-processing. One thing I forgot to mention is to use scope.adc.trig_count to learn how many samples the trigger line was high for; it looks like you’re missing part of the operation here. There is a known bug, so you need to use scope.adc.trig_count as shown in issue #351 of newaetech/chipwhisperer on GitHub (“scope.adc.trig_count sometimes doesn't get reset”).

To know for sure where the AES rounds are, you can move around the trigger_high() call in the firmware source. Or, better yet, if you have a PhyWhisperer-USB, you can run TraceWhisperer on it to get markers when specific instructions are executed.

2- There’s no simple answer to x1 vs x4, but Colin did a detailed study of this: https://eprint.iacr.org/2013/294.pdf. TL;DR: x1 sampling works very well!

Hi,

Thank you for the reply. I didn’t get the scope.adc.trig_count to work. I did what the bug workaround described to do:

initial_trig_count = scope.adc.trig_count

trace = cw.capture_trace(scope, target, text, key)

actual_trig_count = scope.adc.trig_count - initial_trig_count

but it just got evaluated to 0.0.

Also, regarding the pre-processing part. In my compilation arguments, I only use CRYPTO_OPTIONS = 'ANSSI', and not KEYSCHEDULE, and from what is described in e.g. Makefile.maskedaes:

# For example, CRYPTO_OPTIONS=ANSSI+UNROLLED+KEYSCHEDULE will compile

# a firmware using ANSSI's implementation of masked AES for Cortex-M4 devices

# with unrolled loops and the keyschedule will be part of the capture.

I would assume that the encryption itself would start shortly after the trigger? In which code file would I have to move the trigger_high() command around to test (btw, I don’t have the PhyWhisperer, only CW Lite)? I am a bit confused as to what files to look in, I’m quite inexperienced with changing these C files.

Thanks!